AI is now approving loans, flagging fraud, pricing insurance, and scoring risk across India’s financial system — faster than any human committee could. But when the machine makes the wrong call, customers still demand human accountability. Regulators do too.

That is becoming the defining governance challenge for BFSI in 2026.

Across banks, insurers, NBFCs, and fintechs, AI has quietly moved from experimentation to operational infrastructure. It is embedded inside underwriting engines, fraud-monitoring systems, KYC workflows, claims processing, and investment operations. Yet the governance systems surrounding these models remain uneven, fragmented, and in many cases, immature.

The result is a growing accountability gap.

Somewhere in India today, a loan application is being rejected by an algorithm. The applicant does not know why. The frontline employee cannot fully explain the decision either. The model — trained on years of historical financial data and optimised for risk efficiency — has become a black box. And when the complaint escalates, the final question lands not on the machine, but on a human desk:

Who is responsible?

That question now sits at the centre of the Indian financial sector’s AI debate.

RBI’s message to BFSI: accountability cannot be outsourced

The turning point came in August 2025, when the Reserve Bank of India released its landmark FREE-AI framework — Framework for Responsible and Ethical Enablement of Artificial Intelligence. The framework outlined seven guiding principles and 26 recommendations designed to balance AI-led innovation with governance, transparency, and systemic trust.

The signal from the regulator was unmistakable: the era of “deploy first, govern later” is ending.

The framework explicitly states that AI should augment human judgment, not replace it entirely. Institutions remain accountable for outcomes, irrespective of automation levels or third-party AI vendors, according to a KPMG report on the FREE-AI framework.

This matters because much of BFSI’s AI deployment today depends heavily on external vendors, embedded models, and outsourced infrastructure. But regulators are making it clear that accountability does not transfer with the technology contract.

The board remains responsible.

BFSI’s real challenge is no longer adoption — it is governance

The real challenge now is whether organisations can innovate at market speed while building governance systems strong enough to withstand regulatory scrutiny and consumer distrust.

And right now, the gap is widening.

According to findings referenced in the RBI’s FREE-AI discussions, nearly 67% of Indian financial institutions are exploring AI adoption, but only a small proportion have operational maturity around governance, auditability, and post-deployment oversight. Fewer than 15% reportedly conduct systematic monitoring for model drift, bias, or degraded performance after deployment.

That creates a dangerous asymmetry: institutions are accelerating deployment faster than they are building accountability infrastructure.

This is where the leadership challenge becomes existential.

Because AI failures in BFSI are not merely technical errors. They are reputational, regulatory, and trust failures. A biased underwriting model, a false fraud flag, or an opaque insurance pricing engine can quickly escalate into customer backlash, regulatory intervention, or systemic risk concerns.

And unlike software glitches, accountability cannot be automated away.

Why HR is suddenly part of the AI governance conversation

The framework’s “Capacity” pillar pushes institutions to build AI governance literacy across the organisation — not just inside specialist data science teams, according to the KPMG report.

That changes the role of HR fundamentally.

Regulators are no longer only asking whether institutions have deployed AI responsibly. They are beginning to ask whether organisations have the human capability to supervise these systems effectively.

Who can interrogate an algorithmic decision?

Who can explain model outputs to customers?

Who is monitoring for bias?

Who escalates governance risks?

Who owns accountability when automation fails?

These are no longer purely technology questions. They are organisational capability questions.

And many BFSI institutions are discovering that their talent architecture was built for a different era — one defined by stable job descriptions, siloed expertise, and predictable operating environments.

That world no longer exists.

The compliance leader of 2026 now needs AI literacy. Risk leaders need to explain model drift to boards in plain language. Credit managers need to challenge machine recommendations critically rather than accept them passively.

Most institutions have not yet redesigned roles, capability frameworks, or leadership pipelines around that reality.

The middle-management risk nobody is discussing enough

Middle managers are increasingly becoming the operational bridge between AI systems and regulatory accountability. They are expected to oversee automated decisions, interpret risk signals, escalate anomalies, and maintain governance discipline.

But in many organisations, they remain underprepared for the complexity of AI-led operations.

This creates a structural risk.

Because governance frameworks succeed or fail not in boardrooms, but in day-to-day execution. If managers overseeing AI systems lack the authority, capability, or confidence to challenge flawed outputs, accountability mechanisms collapse in practice — even if they exist on paper.

The governance conversation in BFSI therefore cannot remain confined to policy documents and technology teams. It has become a workforce transformation issue.

Build, buy, borrow — or replace with bots?

Underneath the governance debate lies another uncomfortable workforce question: when should BFSI firms build AI capability internally, when should they hire externally, and when are they simply replacing human judgment with automation?

The pressure to automate is enormous.

GenAI productivity gains are already influencing boardroom decisions around cost optimisation, operational efficiency, and workforce design. Globally, financial institutions are piloting increasingly autonomous ‘agentic AI’ systems capable of making real-time operational decisions, according to a Reuters report.

But governance frameworks for such systems are still evolving worldwide.

And that creates a profound leadership dilemma.

Because replacing repetitive tasks with AI is relatively straightforward. Replacing judgment is not.

In highly regulated industries like BFSI, institutions still need capable humans who can intervene when systems fail, challenge outputs when they appear unreasonable, and carry legal and ethical accountability for decisions made at scale.

The real risk is not AI replacing people.

It is organisations deploying AI faster than they are building humans capable of governing it.

The next competitive advantage in BFSI may be trust

As AI systems are increasingly deployed in credit underwriting, fraud detection, insurance pricing, and risk scoring, financial institutions are being pushed toward a new expectation: decisions must be explainable, auditable, and accountable.

Regulators are explicitly reinforcing this shift. The RBI’s FREE-AI framework emphasises that institutions remain fully accountable for AI-driven outcomes, and that AI systems must support transparency and human oversight throughout their lifecycle.

This reflects a core requirement across financial regulation globally: automated decisions must be traceable and explainable in case of customer grievance, audit, or regulatory review.

As BFSI systems become more automated, customers increasingly experience decisions without direct human interaction — approvals, rejections, pricing changes, and fraud flags are often triggered by algorithms embedded within digital systems. This makes transparency in decision logic and grievance redressal processes more critical than before.

Regulatory frameworks such as RBI’s FREE-AI principles, alongside global approaches like the Monetary Authority of Singapore’s FEAT principles (Fairness, Ethics, Accountability, and Transparency), reinforce that trust in financial systems depends on the ability to demonstrate fairness, explain outcomes, and maintain accountability even when automation is involved.

In this environment, BFSI institutions are being required to strengthen model governance, auditability, and explainability mechanisms — not just for compliance, but to maintain confidence in AI-driven financial decision-making.

The leadership conversation BFSI can no longer postpone

These questions are now moving from policy papers into boardrooms, regulator discussions, and talent strategies across the industry.

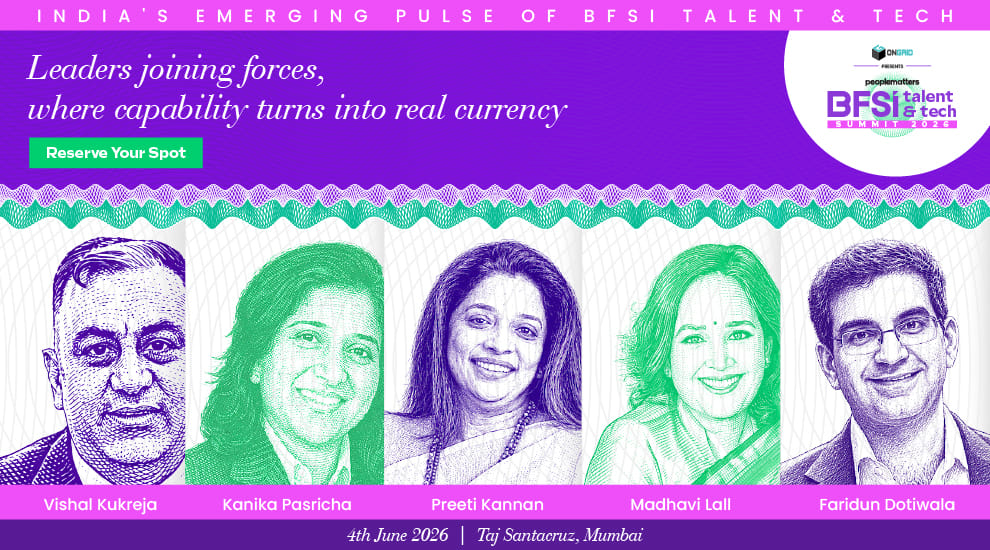

The upcoming People Matters BFSI Tech & Talent Summit 2026, scheduled for June 4, 2026, at Taj Santacruz in Mumbai, will bring together senior BFSI, fintech, and HR leaders shaping the future of AI governance, workforce transformation, and leadership capability.

Among the key speakers at the summit are:

Vishal Kukreja, CHRO, RBL Bank

Sandeep Chaudhary, CEO, PeopleStrong

Preeti Kannan, President & CHRO, IIFL Finance

Madhavi Lall, Managing Director, Head - HR India, Deutsche Bank

Faridun Dotiwala, Partner, McKinsey & Co

Kanika Pasricha, Chief Economic Advisor, Union Bank of India

Key conversations at the summit will explore:

balancing innovation speed with governance discipline,

redesigning talent architecture for AI oversight,

building middle-management capability for AI-era operations,

and preparing succession pipelines for a future where leadership requires both regulatory judgment and AI fluency.

As AI reshapes the financial sector, one reality is becoming impossible to ignore:

The algorithm may make the decision.

But accountability will always remain human.

Sign up today for the People Matters BFSI Tech & Talent Summit 2026 to explore deeper perspectives on the evolution of BFSI leadership, workforce transformation, and AI oversight.