
Staying Human Among Algorithms

Are we moving towards a society where one group will write algorithms and the rest will be ruled by them?

Software systems, in some cases, can be so efficient at screening resumes and evaluating personality tests that 72 percent of resumes [1] are weeded out before a human ever sees them. So what do candidates do to beat hiring algorithms? [2] Some submit multiple versions of their resume, each with different keywords. More inventive ones add keywords in white text, which is invisible to the human eye but read by machines. Machines are great at following rules; humans are ingenious when it comes to breaking them.


Algorithms are codified biases

Algorithms speed up our decision-making when the choices are not strikingly different from one another. But as a Pew Internet report rightly warns, “[algorithms] put too much control in the hands of corporations and governments, perpetuate bias, create filter bubbles, cut choices, creativity and serendipity, and could result in greater unemployment.” After all, algorithms are only opinions expressed in code. They carry the biases of the people who write them and of the data sets they draw on.

Gender is not the only bias

The Economist described research into how Facebook displayed ads promoting jobs in science, technology, engineering, and math: “They found that the ads were less likely to be shown to women than to men. This was not due to a conscious bias on the part of the Facebook algorithm. Rather, young women are a more valuable demographic group on Facebook (because they control a high share of household spending) and thus, ads targeting them are more expensive. The algorithms naturally targeted pages where the return on investment is highest: for men, not women.”

Knowing how algorithms work is an essential part of getting hired. Given the scale, importance, and secrecy of hiring algorithms, they could create a class of people who are rejected and never learn why. Some algorithms even check the weather forecast before deciding the work schedules of thousands of people.

Everywhere and yet invisible

Search engines, the apps on your phone, dating sites, job sites, news sites, shopping sites, travel sites — all run on algorithms. Computer and video games are stories told through algorithms.

Every time you click a button, the action becomes a data point that an algorithm can use to make your life more efficient. 125 million households watch Netflix [3] for more than two hours a day on average. Each pause, each rewind, and each downloaded but unwatched movie is a data point used to place the viewer in one of the 2,000 “taste clusters” that Netflix uses. Netflix encourages every family member to create a separate profile, so that even small differences in viewing habits within a household can sharpen recommendations that are harder to resist.

Shoshana Zuboff, author and the Charles Edward Wilson Professor of Business Administration at Harvard Business School (retired), calls this “surveillance capitalism” [4]: companies know so much about you that they can nudge you to buy more when you can least afford it, or to binge-watch a series and lose sleep just before an exam. Sarah Kessler, deputy editor at Quartz, writes in her book Gigged [5] about how Uber employs hundreds of social scientists and data scientists to help manage its drivers through the app. The company uses video game techniques, graphics, and non-cash rewards to nudge drivers into working more hours.

Efficient but not humane

We as humans need to prioritize humaneness over algorithmic efficiency. One budget airline I fly with asks passengers to declare the weight of their baggage in advance. At the terminal, if the baggage exceeds the declared weight by even a kilogram, the excess-baggage penalty is severe. Watching an old lady in a wheelchair plead with the airline to waive the penalty is heartbreaking. The system is surely efficient, but it is certainly not humane.

Take the case of Xerox. Its algorithms found that job applicants with long commutes were more likely to churn, that is, to quit. But Xerox managers [6] noticed another correlation: people with long commutes tended to live in poor neighborhoods. So Xerox, to its credit, removed the commute data from its algorithm, sacrificing a bit of efficiency for humaneness.

Just as we care whether our food is organic, or whether our shoes were made in a regulated factory or by child labor, we must start asking how algorithms are being supervised.

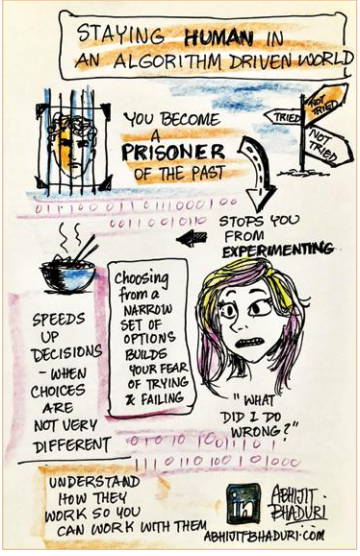

The value of getting lost

Algorithms make us prisoners of the past. They serve up a filtered set of choices based on a profile of us, such as a demographic or other statistical profile. Every choice we make gives the algorithm more data points with which to narrow our future options. This discourages experimentation: we start choosing from a narrow set of options and forget that other possibilities exist. Relying on algorithms weakens people's ability to make decisions for themselves.

Algorithms assume that we will follow the patterns our past decisions have created. Humans have to use their creativity to try things they never have before.

Curiosity is the key to bypassing algorithms: the more unpredictable your choices, the less control machines have.

If we are not careful, we will move toward a society where one group writes the algorithms and the rest are ruled by them. Avoiding that future is the biggest value of getting lost.

References
